The horrific terrorist attack in Christchurch, New Zealand, on March 15 has cast a spotlight on social media platforms, where the shooting was streamed live and instantly reverberated around the world. Less than a month later, the Australian government passed the Criminal Code Amendment (Sharing of Abhorrent Violent Material) Act 2019, which requires social media platforms and Internet service providers to take responsibility for a better Internet environment free of “abhorrent content”.

Australia’s Attorney-General, Christian Porter, said in his second reading speech on the bill that the Act shows the “Australian government expects the providers of online content and hosting services to take responsibility for the use of their platforms to share abhorrent violent material” (Parliament of Australia, April 4, 2019).

What is the law?

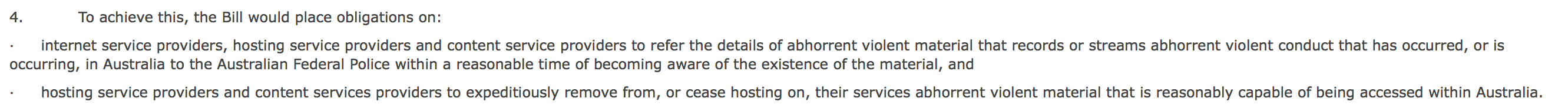

The new law introduces two offences. The first applies to internet service providers, hosting service providers and content service providers. They must report details of abhorrent violent material recording conduct that “has occurred, or is occurring” in Australia to the Australian Federal Police (AFP) within “a reasonable time”. Failure to do so carries fines of up to A$168,000 for an individual or A$840,000 for a corporation.

The second offence requires hosting service providers and content service providers to “expeditiously remove” abhorrent violent material that can be accessed within Australia. Those that fail to do so face up to three years’ imprisonment or fines of up to 10% of the platform’s annual turnover.

Worldwide situation

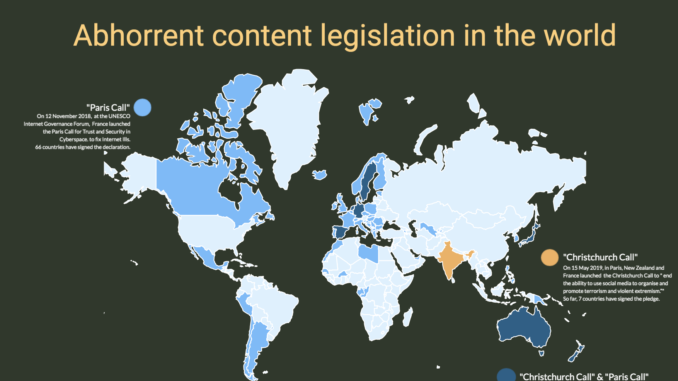

Australia is not the first country whose government has decided to tackle online extremism. At the end of last year, French President Emmanuel Macron launched the Paris Call at the Internet Governance Forum, held at the United Nations Educational, Scientific and Cultural Organization (UNESCO), to address the Internet’s ills. It asks states to work together, and to collaborate with private-sector partners, the world of research and civil society, to prevent cybercrime, including online extremism. Sixty-six states, including Australia, Argentina, Canada and many European countries, signed.

Moreover, New Zealand Prime Minister Jacinda Ardern and Emmanuel Macron launched the Christchurch Call last month to “eliminate terrorist and violent extremist content online”. Seventeen countries, including Australia, Canada, Germany, India and the United Kingdom, signed the non-binding agreement.

Although tackling the Internet’s problems is a worldwide trend, the Australian government’s passage of the Act still seems rushed.

Procedure issues

The Law Council of Australia has also warned: “proposed amendments to criminal legislation to deal with the live streaming of violent material on social media could have serious unintended consequences and should not be rushed through the parliament.”

The Act passed with no opportunity for debate, in which the Labor opposition might have had a chance to vote against it, let alone for public consultation.

Evelyn Douek, an S.J.D. candidate at Harvard Law School and former clerk to the Chief Justice of the High Court of Australia, said: “If the government had taken more time to consult more widely, they would’ve had more opportunity to hear from tech companies, freedom of expression advocates and tech policy academics who would’ve alerted them to the possible harmful side-effects of the legislation.”

She voiced concern about “some of the worst problems, such as vagueness, asking the impossible, targeting the wrong layers of the internet stack, or incentivizing over-censoring by platforms”.

Technical issues

Moderating video remains a huge technical challenge, which reduces the enforceability of the legislation.

Right after the Christchurch shooting, YouTube tried to train its machine-learning programs to identify abhorrent videos. The attempt failed, and identification could not keep up with the speed of uploading and resharing in any case. YouTube had to take down all related videos.

Microsoft’s Content Moderator API is another example. According to Robert Merkel, a lecturer in Software Engineering at Monash University, the API can neither automatically classify a video as “abhorrent violent content” nor automatically identify videos similar to another video.

With video moderation technology still this under-developed, if the government uses laws and penalties to force social media platforms and Internet service providers to take down and report abhorrent content, the awkward situation seen in Germany might be repeated in Australia.

According to DW (January 15, 2018), Germany’s network enforcement law, known as NetzDG and passed at the end of 2017, requires social media platforms to quickly remove offensive and illegal material. However, satirical and humorous content on Facebook and Instagram was also censored. An image by German street artist “Barbara”, showing a bikini top covering a traffic sign that alerts drivers to speed bumps, was removed from the platforms.

“This illustrates the problems that crop up when service providers take such decisions on tight deadlines, especially with difficult evaluative questions, such as how to treat satire,” Petra Sitte of the Left Party told DW.

Implementation issues

Last month, Prime Minister Scott Morrison’s government tried to patch the Act by announcing a new plan that requires social media platforms to publish transparency reports on the number and type of, and complaints about, illegal, abusive and predatory content posted by their users. This still does not resolve the ambiguity and inoperability of the Act.

The term “expeditiously” is not defined in the law.

Attorney-General Porter may have given a hint in his second reading speech. He said: “the relevant footage was broadcast for 17 minutes without interruption and it was another 12 minutes after that point in time that the first user report on the original video was received by Facebook. The material was live-streamed on Facebook and available on that platform for almost an hour and 10 minutes until the first attempts were made to take it down. Simply put, we find that unacceptable.”

According to Ms Douek, this suggests that “expeditiously remove” may be measured in hours. That would be far shorter than even the 24-hour period set by NetzDG.

Meanwhile, it is also unclear to whom the Act applies.

Does it cover only Australian companies, or any social media platform or Internet service provider doing business in the Australian market? And will executives of multinational social media giants really go to jail in Australia, when lawmakers from nine countries could not even get Mark Zuckerberg to appear at hearings in the U.K. last year?

Many questions need to be answered before the Act can be implemented. Having been made in such a rush, the new law risks losing its enforceability. What do you think?

References

DW. (January 15, 2018). Facebook slammed for censoring German street artist. Retrieved from https://www.dw.com/en/facebook-slammed-for-censoring-german-street-artist/a-42155218

Evelyn Douek. (April 10, 2019). Australia’s New Social Media Law Is a Mess. Retrieved from https://www.lawfareblog.com/australias-new-social-media-law-mess

Parliament of Australia. (April 4, 2019). House Hansard of Criminal Code Amendment (Sharing of Abhorrent Violent Material) Bill 2019, Second Reading. Retrieved from https://parlinfo.aph.gov.au/parlInfo/search/display/display.w3p;query=Id%3A%22chamber%2Fhansardr%2F84457b57-5639-432a-b4df-68b704cb3563%2F0032%22

Robert Merkel. (April 2, 2019). Livestreaming terror is abhorrent – but is more rushed legislation the answer? Retrieved from https://theconversation.com/livestreaming-terror-is-abhorrent-but-is-more-rushed-legislation-the-answer-114620